How LLM Inference Works: Tokens, Context Windows, and KV Cache

Language models process tokens, not words. Learn how BPE tokenization works, what the context window really is, and how the KV cache speeds up generation — with real pricing comparisons across OpenAI, Anthropic, and Google.

Infrastructure engineer with 10+ years building production systems on AWS, GCP,…

Monday 9:18 AM -- The AWS Bill Says $4,217 for What Felt Like a Prototype

You are looking at last month's inference spend on a retrieval-augmented chatbot that serves maybe 800 active users. Each conversation averages six turns. The model is mid-tier. You priced it at $0.003 per 1,000 input tokens and $0.015 per 1,000 output tokens, and when you did the math on a napkin in January it came out to roughly $140 a month. The invoice in front of you is $4,217, and the support ticket you opened came back with a breakdown: 84% of the cost is input tokens, not output. The question you asked yourself -- "why am I paying to send the same system prompt 4,800 times a day?" -- is the question this guide answers.

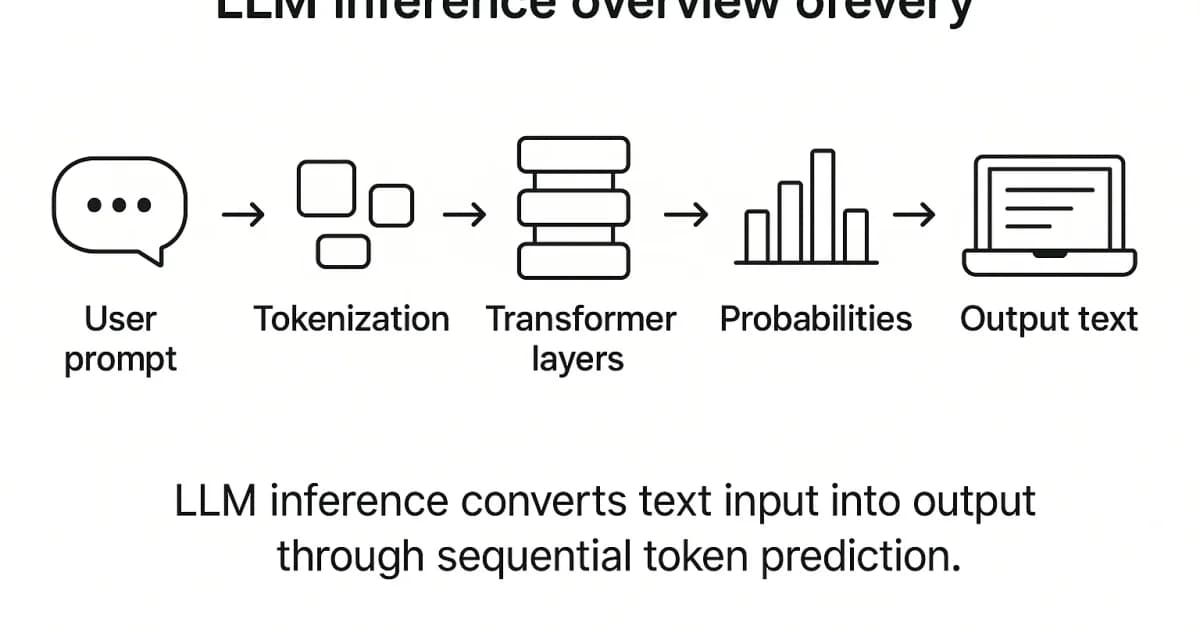

Everything unintuitive about that bill collapses into three concepts that live at the inference layer: how your text is chopped into tokens before the model sees it, what the context window really contains on every call (system prompt + tool schemas + conversation history + the new message, sent in full each time unless you cache it), and what the KV cache does inside the transformer to make generation fast -- and why it is the reason each extra token in your context gets slightly more expensive than the last. Get the mental model right and the bill, the latency profile, the RAG chunking strategy, and the prompt engineering all start making sense.

I have spent years building systems on top of language model APIs from Anthropic and OpenAI, and on open-weight local deployments. The single largest knowledge gap I see in developers is right here -- at the token and KV-cache layer -- and it is usually the reason for exactly the kind of bill shock above. The rest of this article walks through the tokenizer comparison across major LLMs (GPT-4, Claude, Llama 3, Gemini), what actually fills the context window on each call, prefix caching and prompt caching features on the major APIs, and the math that lets you predict next month's bill before you deploy.

Tokens: The Unit That Drives Pricing and Limits

Tokens are not words, not characters, and not syllables. They are statistically derived chunks produced by a tokenizer algorithm -- typically 3-4 characters in English. The word "embedding" is one token in most tokenizers. The word "counterintuitive" gets split into multiple tokens. Whitespace, punctuation, and partial words all become distinct tokens, and each one maps to a numerical ID in a fixed vocabulary the model was trained on.

Here's a concrete example. The sentence "Tokenization is surprisingly important" breaks down differently across tokenizers:

| Tokenizer | Token Count | Tokens |
|---|---|---|
| GPT-4 (cl100k_base) | 5 | ["Token", "ization", " is", " surprisingly", " important"] |
| Claude (custom BPE) | 5 | ["Token", "ization", " is", " surprisingly", " important"] |
| Llama 3 (tiktoken-based) | 5 | ["Token", "ization", " is", " surprisingly", " important"] |

The exact splits vary, but the principle is the same across all modern LLMs. Common words stay whole. Rare words get broken into pieces. This is by design — it lets the model handle any text, including words it's never seen before, using a fixed-size vocabulary.

How Tokenization Works: Byte-Pair Encoding

The dominant tokenization algorithm in modern LLMs is Byte-Pair Encoding (BPE). Here's how it builds a vocabulary:

Step 1: Start with bytes

The initial vocabulary is every possible byte (256 entries). Every piece of text can be represented as a sequence of bytes, so coverage is guaranteed from the start.

Step 2: Count adjacent pairs

Scan the entire training corpus and count how often each pair of adjacent tokens appears. The most frequent pair — say, t followed by h — becomes a candidate for merging.

Step 3: Merge the most frequent pair

Replace every occurrence of that pair with a new single token. Add it to the vocabulary. The vocabulary grows by one entry.

Step 4: Repeat until target vocabulary size

Keep merging the most frequent remaining pair. After tens of thousands of merges, you get a vocabulary where common words like "the" and "is" are single tokens, and rare words are split into subword pieces. GPT-4's tokenizer has about 100,000 tokens. Llama 3 uses 128,000.
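The four steps above can be sketched in a few lines of Python. This is a toy trainer, not how any production tokenizer is built: real implementations pre-split text, train on huge corpora, and use far faster data structures. The function name `train_bpe` and the sample corpus are illustrative assumptions.

```python
from collections import Counter

def train_bpe(corpus: bytes, num_merges: int):
    """Learn up to `num_merges` merges from `corpus`, starting from raw bytes."""
    seq = list(corpus)                            # Step 1: token IDs 0..255 are the bytes
    vocab = {i: bytes([i]) for i in range(256)}
    merges = []
    for _ in range(num_merges):
        pairs = Counter(zip(seq, seq[1:]))        # Step 2: count adjacent pairs
        if not pairs:
            break
        (a, b), count = pairs.most_common(1)[0]
        if count < 2:
            break                                 # nothing repeats, so stop merging
        new_id = len(vocab)                       # Step 3: vocabulary grows by one
        vocab[new_id] = vocab[a] + vocab[b]
        merges.append((a, b))
        merged, i = [], 0
        while i < len(seq):
            if i + 1 < len(seq) and seq[i] == a and seq[i + 1] == b:
                merged.append(new_id)             # replace the pair with the new token
                i += 2
            else:
                merged.append(seq[i])
                i += 1
        seq = merged                              # Step 4: repeat on the merged sequence
    return vocab, merges, seq

corpus = b"the thin thing then"
vocab, merges, tokens = train_bpe(corpus, num_merges=3)
print([vocab[t] for t in tokens])
```

On this tiny corpus the first merge is the pair t + h, matching the example in step 2. Joining the byte strings of the final tokens always reproduces the original text, which is why byte-level BPE never hits an out-of-vocabulary input.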

Pro tip: Use OpenAI's online tokenizer tool or the tiktoken Python library to see exactly how your prompts tokenize. This is essential for estimating costs and staying within context limits. Anthropic reports input and output token counts in the usage field of each API response and also offers a token-counting endpoint.

Tokenizer Comparison Across Major LLMs

Not all tokenizers are equal. Vocabulary size, training data, and merge rules create measurable differences:

| Model Family | Tokenizer | Vocab Size | Avg Tokens per English Word | Notes |
|---|---|---|---|---|
| GPT-4 / GPT-4o | cl100k_base / o200k_base | 100K / 200K | ~1.3 | Larger vocab in GPT-4o reduces token count |
| Claude 3.5 / Claude 4 | Custom BPE | ~100K | ~1.3 | Efficient on code and structured text |
| Llama 3 | tiktoken-based BPE | 128K | ~1.2 | Expanded vocab improves multilingual efficiency |
| Gemini 1.5 / 2.0 | SentencePiece | ~256K | ~1.2 | Very large vocab, strong multilingual support |
| Mistral | SentencePiece BPE | 32K | ~1.4 | Smaller vocab means more tokens per prompt |

Watch out: Non-English text and code can tokenize very differently. Chinese text might use 2-3x more tokens than the equivalent English for the same semantic content. This directly affects cost and context window utilization. Always test your specific use case.

What Is the Context Window, Really?

Direct answer: The context window is the maximum number of tokens a model can process in a single forward pass — including both input (your prompt) and output (the model's response). It's a hard architectural limit determined by the positional encoding scheme used during training.

When people say "GPT-4o has a 128K context window," they mean the model can handle 128,000 tokens total across input and output. That's roughly 96,000 words of English text, or about 300 pages. But context window size alone doesn't tell you much about quality — most models degrade on tasks that require attending to information in the middle of very long contexts (the "lost in the middle" problem).
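A rough budget check follows from that arithmetic. The sketch below assumes the rule of thumb of about 750 English words per 1,000 tokens; the helper names and the default window size are illustrative, and a real check should count tokens with the model's own tokenizer.

```python
def estimate_tokens(word_count: int) -> int:
    """Estimate tokens from English words at ~750 words per 1,000 tokens."""
    return -(-word_count * 1000 // 750)  # integer ceiling division

def fits_in_context(prompt_words: int, max_output_tokens: int,
                    context_window: int = 128_000) -> bool:
    """Input and output share one window, so both count against the limit."""
    return estimate_tokens(prompt_words) + max_output_tokens <= context_window

print(estimate_tokens(96_000))          # a 96,000-word prompt
print(fits_in_context(90_000, 4_000))   # leaves room for a 4,000-token reply
print(fits_in_context(96_000, 1))       # the prompt alone already fills the window
```

The last call is the case people forget: a prompt that exactly fills the window leaves no room for the response.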

Context Window Sizes Across Models (2025-2026)

| Model | Context Window | Approximate English Words | Notes |
|---|---|---|---|
| GPT-4o | 128K tokens | ~96,000 | Good recall across full context |
| GPT-4.1 | 1M tokens | ~750,000 | Long-context variant |
| Claude Opus 4 / Sonnet 4 | 200K tokens | ~150,000 | Strong long-context performance |
| Gemini 2.5 Pro | 1M tokens | ~750,000 | Among the largest production context windows |
| Llama 3.1 (405B) | 128K tokens | ~96,000 | Open-weight, self-hosted option |
| Mistral Large | 128K tokens | ~96,000 | Competitive pricing |

The context window is tied to how the model encodes token positions. The original Transformer used fixed sinusoidal encodings, which in practice generalized poorly beyond the lengths seen in training. Modern models use Rotary Position Embeddings (RoPE), which can be extended beyond training length using techniques like YaRN or NTK-aware scaling, but performance typically drops when you push far beyond the trained length.

The KV Cache: Why Generation Gets Faster

Here's where it gets interesting. When an LLM generates text token by token (autoregressive generation), it needs to attend to every previous token at each step. Without optimization, generating the 1000th token would require recomputing the keys and values for all 999 previous tokens from scratch, and then doing it all again for token 1001. Summed over a whole response, that's quadratic work, and it's brutal.

The key-value (KV) cache solves this. During the initial prompt processing (called the "prefill" phase), the model computes key and value vectors for every token in every attention layer. These vectors are stored in GPU memory. When generating each new token, the model only computes the query, key, and value for the new token, then looks up the cached keys and values for all previous tokens.

How KV Cache Works: Step by Step

Step 1: Prefill phase

The entire prompt is processed in parallel. Key (K) and value (V) vectors are computed for every token at every attention layer and stored in the cache. This is the most compute-intensive phase.

Step 2: Decode phase — first new token

The model generates one new token. It computes Q, K, V for just this token, appends K and V to the cache, and uses the full cached K and V to compute attention. Only one token's worth of computation is needed.

Step 3: Decode phase — subsequent tokens

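Each new token repeats step 2. The cache grows by one entry per layer per token. The projection and feed-forward work per token stays constant; only the attention lookup over the cached keys and values grows with context length, instead of the whole prefix being reprocessed at every step.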

Step 4: Cache eviction

When the cache fills up (hits the context window limit), the system must either stop generation or evict old entries. Some systems use sliding window eviction; others simply refuse to continue.
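To make the prefill and decode steps concrete, here is a minimal single-head sketch in plain Python. The weights, the inputs, and the names `CachedHead` and `recompute_last` are made up for illustration; a real model runs many heads and layers on a GPU and processes the prompt in parallel rather than one token at a time.

```python
import math

def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def attend(q, keys, values):
    """Scaled dot-product attention of one query over all cached positions."""
    scale = 1.0 / math.sqrt(len(q))
    scores = [scale * sum(a * b for a, b in zip(q, k)) for k in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(len(values[0]))]

class CachedHead:
    def __init__(self, Wq, Wk, Wv):
        self.Wq, self.Wk, self.Wv = Wq, Wk, Wv
        self.keys, self.values = [], []          # the KV cache

    def step(self, x):
        # Compute K and V for the new token only, append them to the cache,
        # then attend with the new query over everything cached so far.
        self.keys.append(matvec(self.Wk, x))
        self.values.append(matvec(self.Wv, x))
        return attend(matvec(self.Wq, x), self.keys, self.values)

def recompute_last(Wq, Wk, Wv, xs):
    """No cache: rebuild K and V for every token just to get the last output."""
    keys = [matvec(Wk, x) for x in xs]
    values = [matvec(Wv, x) for x in xs]
    return attend(matvec(Wq, xs[-1]), keys, values)

Wq = [[1.0, 0.0], [0.0, 1.0]]
Wk = [[0.5, 0.1], [0.2, 0.3]]
Wv = [[0.3, 0.7], [0.6, 0.1]]
xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]        # three token embeddings

head = CachedHead(Wq, Wk, Wv)
outputs = [head.step(x) for x in xs]
print(outputs[-1], recompute_last(Wq, Wk, Wv, xs))
```

Both paths produce the same output for the last token. The cached path does one set of projections per step, while the uncached path redoes the projections for the whole prefix every time.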

Pro tip: The KV cache is why "time to first token" (TTFT) and "tokens per second" (TPS) are different metrics. TTFT reflects the prefill phase — processing your entire prompt. TPS reflects the decode phase, which is much faster per token because of the cache. A 10,000-token prompt might take 2 seconds for TTFT but then generate at 80 tokens per second.
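Those two metrics combine into a simple estimate of end-to-end response time. The sketch below uses the 2-second TTFT and 80 tokens per second from the tip above; the 800-token response length and the function name are assumptions for illustration.

```python
def response_time_s(ttft_s: float, output_tokens: int, tokens_per_s: float) -> float:
    """Prefill time plus decode time for the generated tokens."""
    return ttft_s + output_tokens / tokens_per_s

total = response_time_s(ttft_s=2.0, output_tokens=800, tokens_per_s=80.0)
print(total)  # seconds until the full response has streamed
```

For long responses the decode term dominates, so trimming output length often helps total latency more than trimming the prompt.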

The KV cache has a real cost: GPU memory. For a 70B parameter model with 80 attention layers, caching 128K tokens of KV pairs consumes about 40 GB of VRAM for a single sequence, even with grouped-query attention and 16-bit values. With full multi-head attention the same cache is several times larger and can exceed the model weights themselves. This is why serving long-context models is expensive, and why providers charge more for longer inputs.
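The memory figure can be checked with a short calculation. The 80 layers and 128K tokens come from the paragraph above; the 8 KV heads, head dimension of 128, and 2 bytes per value are assumptions typical of a Llama-style 70B model with grouped-query attention, not figures from any provider.

```python
def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   tokens: int, bytes_per_value: int = 2) -> int:
    """Size of the K and V tensors for one sequence (the leading 2 is K plus V)."""
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value

tokens = 128 * 1024
gqa = kv_cache_bytes(layers=80, kv_heads=8, head_dim=128, tokens=tokens)
mha = kv_cache_bytes(layers=80, kv_heads=64, head_dim=128, tokens=tokens)

print(f"grouped-query attention: {gqa / 2**30:.0f} GiB")
print(f"full multi-head attention: {mha / 2**30:.0f} GiB")
```

Under these assumptions the grouped-query cache comes to 40 GiB per sequence and the full multi-head cache to 320 GiB, which is why grouped-query attention has become common in long-context models.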

LLM API Pricing: What Tokens Actually Cost

Understanding tokens makes pricing transparent. Every major LLM API charges per token, with different rates for input (prompt) and output (completion) tokens. Output tokens are more expensive because each one requires a full forward pass through the model.

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Context Window |
|---|---|---|---|
| GPT-4o | $2.50 | $10.00 | 128K |
| GPT-4.1 | $2.00 | $8.00 | 1M |
| Claude Sonnet 4 | $3.00 | $15.00 | 200K |
| Claude Haiku 3.5 | $0.80 | $4.00 | 200K |
| Gemini 2.5 Pro | $1.25 | $10.00 | 1M |
| Gemini 2.5 Flash | $0.15 | $0.60 | 1M |
| Llama 3.1 405B (via Together) | $3.50 | $3.50 | 128K |
| Mistral Large | $2.00 | $6.00 | 128K |

Cost Calculation Example

Say you're building a RAG chatbot that sends 3,000 tokens of context + 500 tokens of user query per request, and the model generates 800 tokens of response. Using GPT-4o:

- Input: 3,500 tokens x ($2.50 / 1,000,000) = $0.00875

- Output: 800 tokens x ($10.00 / 1,000,000) = $0.008

- Total per request: ~$0.017

- At 10,000 requests/day: ~$170/day or ~$5,100/month
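The same arithmetic as a reusable function, so you can swap in other rows from the pricing table. The function name is illustrative, and the prices are the GPT-4o figures quoted above, which may have changed since.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_per_m: float, output_per_m: float) -> float:
    """Dollar cost of one request given per-million-token prices."""
    return (input_tokens * input_per_m + output_tokens * output_per_m) / 1_000_000

per_request = request_cost(3_500, 800, input_per_m=2.50, output_per_m=10.00)
per_day = per_request * 10_000

print(f"per request: ${per_request:.5f}")
print(f"per day:     ${per_day:.2f}")
print(f"per 30 days: ${per_day * 30:.2f}")
```

Unrounded, this comes to $167.50 a day, or about $5,025 over 30 days, in line with the rounded figures in the list.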

Watch out: Costs scale with conversation length. In a multi-turn chat, every previous message is re-sent as input tokens on each turn. A 20-message conversation can easily hit 15,000+ input tokens per request — 4x the cost of the first message. Implement conversation summarization or truncation to control this.
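To see how quickly resent history adds up, the sketch below tracks input tokens per turn. It reuses the numbers from the cost example (3,000 tokens of context, 500-token user messages, 800-token replies) and assumes the full history is resent on every turn with no summarization; the function name is illustrative.

```python
def input_tokens_per_turn(fixed_tokens: int, user_tokens: int,
                          reply_tokens: int, turns: int) -> list:
    """Input tokens billed on each turn when the whole history is resent."""
    per_turn, history = [], 0
    for _ in range(turns):
        per_turn.append(fixed_tokens + history + user_tokens)
        history += user_tokens + reply_tokens   # both sides join the history
    return per_turn

usage = input_tokens_per_turn(fixed_tokens=3_000, user_tokens=500,
                              reply_tokens=800, turns=10)
print(usage[0], usage[-1], sum(usage))
```

Ten turns is a 20-message conversation. The tenth request carries 15,200 input tokens against 3,500 for the first, and the conversation as a whole bills 93,500 input tokens.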

Practical Implications for Developers

Prompt Design Affects Cost Directly

Every token in your system prompt is charged on every request. A 2,000-token system prompt across 100,000 daily requests means 200 million input tokens per day. At GPT-4o rates, that's $500/day just for the system prompt. Keep system prompts tight and move static context into retrieval where possible.

RAG Chunking Strategy Depends on Tokenization

When chunking documents for retrieval-augmented generation, chunk size should be measured in tokens, not characters or words. A 512-token chunk is roughly 380 English words but could be as few as 200 words in code-heavy or multilingual content. Use the model's tokenizer to measure chunks accurately.
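A minimal token-based chunker might look like the sketch below. It takes the tokenizer's encode and decode functions as parameters; the demo uses whitespace splitting as a stand-in so the example runs without dependencies, which is an assumption and not a real tokenizer. With tiktoken you would pass the `encode` and `decode` methods of an encoding object instead.

```python
def chunk_by_tokens(text, max_tokens, encode, decode, overlap=0):
    """Split `text` into chunks of at most `max_tokens` tokens, with optional overlap."""
    if not 0 <= overlap < max_tokens:
        raise ValueError("overlap must be in [0, max_tokens)")
    tokens = encode(text)
    step = max_tokens - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(decode(tokens[start:start + max_tokens]))
        if start + max_tokens >= len(tokens):
            break                      # the last chunk already reached the end
    return chunks

# Stand-in tokenizer for the demo: one "token" per whitespace-separated word.
chunks = chunk_by_tokens("a b c d e f g h i j", max_tokens=4,
                         encode=str.split, decode=" ".join, overlap=1)
print(chunks)
```

One caveat with real subword tokenizers: slicing token IDs at arbitrary positions can cut a word or a multi-byte character in half, so production chunkers usually snap boundaries to sentence or paragraph breaks.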

Sliding Window and Sparse Attention Extend Context

Recent architectural innovations push past the traditional context limit. Sliding window attention (used in Mistral) lets each token attend to only a fixed window of recent tokens in some layers, dramatically reducing memory. Sparse attention patterns skip attending to every token, focusing on a learned subset. These techniques don't change the token model — they change how efficiently the model processes tokens within the context window.
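The sliding window idea is easiest to see as an attention mask. This is a schematic sketch with an illustrative function name, not Mistral's implementation: each position may attend to itself and to the previous `window - 1` positions.

```python
def sliding_window_mask(seq_len: int, window: int) -> list:
    """mask[i][j] is True when token i may attend to token j (causal, windowed)."""
    return [[0 <= i - j < window for j in range(seq_len)]
            for i in range(seq_len)]

mask = sliding_window_mask(seq_len=5, window=3)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
```

Each row has at most `window` allowed positions, so in those layers the KV cache needs to hold only the most recent `window` tokens instead of the full context.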

Caching Reduces Costs for Repeated Prefixes

Both OpenAI and Anthropic now offer prompt caching — if consecutive requests share the same prefix (like a system prompt), the KV cache for that prefix is reused server-side, and you pay a reduced rate for those cached input tokens. This can cut costs by 50-90% for applications with static system prompts.
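A rough model of the savings, using a 2,000-token system prompt sent 100,000 times a day at $2.50 per million input tokens. The `cached_discount` and `hit_rate` parameters are hypothetical knobs, not provider settings, and the sketch ignores any surcharge a provider may apply when a prefix is first written to the cache.

```python
def daily_input_cost(prefix_tokens, variable_tokens, requests, price_per_m,
                     cached_discount=0.0, hit_rate=0.0):
    """Daily input cost when a share of the shared prefix is billed at a discount."""
    prefix_total = prefix_tokens * requests
    cached = prefix_total * hit_rate
    full_price = prefix_total - cached + variable_tokens * requests
    billable = full_price + cached * (1.0 - cached_discount)
    return billable * price_per_m / 1_000_000

baseline = daily_input_cost(2_000, 0, 100_000, 2.50)
half_off = daily_input_cost(2_000, 0, 100_000, 2.50,
                            cached_discount=0.5, hit_rate=1.0)
ninety_off = daily_input_cost(2_000, 0, 100_000, 2.50,
                              cached_discount=0.9, hit_rate=1.0)
print(f"${baseline:.2f}  ${half_off:.2f}  ${ninety_off:.2f}")
```

With every request hitting the cache, the system prompt's daily cost drops from $500 to $250 at a 50% discount and to about $50 at 90%. Real hit rates are lower, because caches expire and any change to the prefix invalidates them.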

Frequently Asked Questions

How many tokens is a typical English word?

In English, one word averages about 1.3 tokens with modern tokenizers like cl100k_base or o200k_base. Short common words ("the", "is", "at") are single tokens. Longer or rarer words get split into subword pieces. A rough rule of thumb: 750 English words is approximately 1,000 tokens, but always verify with the specific tokenizer for your model.

Why are output tokens more expensive than input tokens?

Input tokens are processed in parallel during the prefill phase, which is highly efficient on GPUs. Output tokens are generated one at a time (autoregressively), and each requires a full forward pass through the model. This sequential generation is inherently slower and ties up GPU resources for longer, which is why providers charge 3-5x more for output tokens.

What happens when I exceed the context window?

The API will return an error — you won't get a truncated response. You need to reduce your input before retrying. Most API clients and frameworks provide token-counting utilities so you can check length before sending. Some frameworks automatically truncate conversation history from the middle or beginning to stay within limits.
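One simple way to stay under the limit is to drop the oldest turns first. The sketch below assumes the first message is the system prompt and that `count_tokens` is whatever counter matches your model; the word-count lambda in the demo is a stand-in, and the function name is illustrative.

```python
def trim_history(messages, count_tokens, budget):
    """Keep the system message plus the newest messages that fit in `budget` tokens."""
    system, rest = messages[0], messages[1:]
    used = count_tokens(system)
    kept = []
    for msg in reversed(rest):             # walk from newest to oldest
        cost = count_tokens(msg)
        if used + cost > budget:
            break                          # everything older is dropped too
        kept.append(msg)
        used += cost
    return [system] + kept[::-1]           # restore chronological order

history = ["sys prompt", "one two three", "four five", "six"]
trimmed = trim_history(history, count_tokens=lambda m: len(m.split()), budget=5)
print(trimmed)
```

Set `budget` to the context window minus the tokens you want to reserve for the response, since input and output share the same limit.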

Does the KV cache persist between API requests?

Not by default. Each API request starts with a fresh KV cache. However, both OpenAI and Anthropic offer prompt caching features where the server retains KV cache entries for common prefixes across requests. OpenAI applies it automatically to sufficiently long prompts, while Anthropic's is opt-in: you mark cache breakpoints in the request. In both cases the cached prefix must be identical across requests, and there are minimum length thresholds.

How does tokenization affect non-English languages?

Most tokenizers are trained primarily on English text, so English gets the most efficient encoding. Chinese, Japanese, Korean, Arabic, and other scripts often require 2-4x more tokens per semantic unit compared to English. This means non-English users hit context limits faster and pay more per equivalent content. Newer models with larger vocabularies (like Gemini) are improving this.

What is the difference between BPE and SentencePiece?

BPE (Byte-Pair Encoding) and SentencePiece are not mutually exclusive. SentencePiece is a library that can implement BPE or Unigram tokenization. The key difference is that SentencePiece treats the input as a raw character stream without pre-tokenization, encoding whitespace as an ordinary symbol, while traditional BPE implementations first split on whitespace and punctuation. SentencePiece's approach handles multilingual text more uniformly, including languages that do not put spaces between words.

Can I reduce costs by using a different tokenizer?

No — you must use the tokenizer that matches your model. Each model is trained with a specific vocabulary, and using a different tokenizer would produce meaningless token IDs. However, you can reduce costs by choosing models with larger vocabularies (which tend to produce fewer tokens for the same text), by shortening prompts, caching repeated prefixes, and using cheaper models for simpler tasks.

Conclusion

Tokens are the atom of LLM inference. Every constraint — context windows, pricing, generation speed, memory usage — traces back to the token model. BPE tokenization turns arbitrary text into a fixed vocabulary of subword units. The context window is a hard architectural limit on how many tokens the model can attend to at once. And the KV cache is the optimization that makes autoregressive generation practical by trading memory for compute.

The practical takeaway: measure everything in tokens. Estimate costs in tokens. Design chunking strategies in tokens. Monitor context utilization in tokens. The developers who understand the token layer build faster, cheaper, and more reliable LLM applications than those who treat the API as a magic text box.

Written by

Abhishek Patel

Infrastructure engineer with 10+ years building production systems on AWS, GCP, and bare metal. Writes practical guides on cloud architecture, containers, networking, and Linux for developers who want to understand how things actually work under the hood.
