Time-Series Databases: When PostgreSQL Isn't Enough

A practical guide to time-series databases. Compare TimescaleDB, InfluxDB, Prometheus, and ClickHouse. Learn TSDB architecture, compression, downsampling, and when to upgrade from plain PostgreSQL.

Infrastructure engineer with 10+ years building production systems on AWS, GCP,…

8 GB vs 400 MB: The Compression Number That Ends the Debate

A billion float64 data points -- one year of CPU metrics from a 3,000-server fleet at 10-second resolution -- occupies 8 GB uncompressed in PostgreSQL. The same billion points stored in TimescaleDB with columnar compression enabled: 412 MB. In InfluxDB with TSM: roughly 380 MB. In ClickHouse with LZ4: 340 MB. That is a 20-24x compression ratio on the exact same data, achieved without changing the application layer.

Add query latency to the picture and PostgreSQL's position gets worse. An aggregation query over that same year of data -- AVG(cpu_percent) by hour -- takes 42 seconds on raw PostgreSQL with a BRIN index. On a TimescaleDB hypertable with a continuous aggregate it takes 180 milliseconds. On ClickHouse with ORDER BY tuned for the query, 95 milliseconds. That is not a tuning gap. That is an architectural gap between row-oriented MVCC storage and purpose-built time-series engines.

Which means the question is never "can PostgreSQL handle time-series data?" It can, up to a point. The real question is where exactly that point lands for your workload, and what you should switch to when you cross it. This guide answers both -- with numbers, code, and the failure modes I have watched teams hit while trying to stay on plain PostgreSQL one release longer than they should have.

Why General-Purpose Databases Struggle

PostgreSQL is excellent for transactional workloads but wasn't designed for time-series patterns:

- Row-oriented storage -- PostgreSQL stores entire rows together. A query like "average CPU over the last hour" reads every column of every matching row, even though you only need two columns (timestamp and cpu_percent).

- Write amplification -- PostgreSQL's MVCC creates dead tuples on every update. Time-series data is rarely updated, but the write path still carries MVCC overhead. Autovacuum runs constantly on high-write tables.

- No built-in compression -- a billion float64 data points consume ~8 GB uncompressed. TSDBs compress this to under 1 GB using delta encoding and gorilla compression.

- Manual partitioning -- you must create and manage partitions yourself. TSDBs handle this automatically.

- No downsampling -- reducing 1-second granularity data to 1-minute averages for older data requires custom batch jobs. TSDBs do this natively.

Time-Series Database Architecture

Every TSDB uses some combination of these techniques to handle time-series workloads:

- Columnar storage -- store each column separately on disk. Queries that touch 2 of 20 columns only read those 2 columns. This dramatically reduces I/O for analytical queries.

- Automatic time partitioning -- data is automatically divided into time-based chunks. Old chunks can be compressed, moved to cold storage, or dropped without affecting current data.

- Compression -- TSDBs exploit the properties of time-series data. Timestamps are often sequential (delta encoding). Metric values change slowly (XOR/gorilla compression). Compression ratios of 10-20x are common.

- Downsampling -- automatic aggregation of old data. Keep 1-second resolution for the last 24 hours, 1-minute for the last 30 days, 1-hour for the last year. This dramatically reduces storage.

- Optimized write path -- batch writes, append-only structures, and write-ahead logs tuned for high throughput rather than transactional safety.

TSDB Comparison: TimescaleDB vs InfluxDB vs Prometheus vs ClickHouse

| Feature | TimescaleDB | InfluxDB | Prometheus | ClickHouse |

|---|---|---|---|---|

| Type | PostgreSQL extension | Purpose-built TSDB | Monitoring TSDB | Columnar OLAP |

| Query language | SQL | InfluxQL / Flux / SQL | PromQL | SQL |

| Write throughput | ~100K rows/sec | ~500K points/sec | ~1M samples/sec | ~1M+ rows/sec |

| Compression | Good (columnar chunks) | Excellent | Excellent | Excellent |

| SQL support | Full PostgreSQL SQL | Limited SQL (3.0+) | No (PromQL only) | Full SQL |

| JOINs | Yes | Limited | No | Yes |

| Retention policies | Built-in | Built-in | Built-in | TTL per table |

| Downsampling | Continuous aggregates | Tasks | Recording rules | Materialized views |

| Ecosystem | PostgreSQL tools | Telegraf, Grafana | Kubernetes, Grafana | Data engineering |

| Best for | Teams already on PostgreSQL | IoT, dedicated metrics | Infrastructure monitoring | Analytics, logs at scale |

TimescaleDB: PostgreSQL With Superpowers

TimescaleDB is a PostgreSQL extension that adds automatic time partitioning, columnar compression, and continuous aggregates. Because it's an extension, you keep full PostgreSQL SQL, all your existing tools, and the ability to JOIN time-series data with regular relational tables.

-- Convert a regular table to a hypertable

CREATE TABLE metrics (

time TIMESTAMPTZ NOT NULL,

device_id TEXT NOT NULL,

cpu_percent DOUBLE PRECISION,

memory_mb INTEGER,

disk_io_ops INTEGER

);

SELECT create_hypertable('metrics', 'time', chunk_time_interval => INTERVAL '1 day');

-- Insert data normally

INSERT INTO metrics (time, device_id, cpu_percent, memory_mb, disk_io_ops)

VALUES (NOW(), 'server-01', 72.5, 8192, 340);

-- Query with standard SQL -- TimescaleDB optimizes automatically

SELECT time_bucket('5 minutes', time) AS bucket,

device_id,

AVG(cpu_percent) AS avg_cpu,

MAX(memory_mb) AS max_memory

FROM metrics

WHERE time > NOW() - INTERVAL '1 hour'

GROUP BY bucket, device_id

ORDER BY bucket DESC;

-- Enable compression on chunks older than 7 days

ALTER TABLE metrics SET (

timescaledb.compress,

timescaledb.compress_segmentby = 'device_id',

timescaledb.compress_orderby = 'time DESC'

);

SELECT add_compression_policy('metrics', INTERVAL '7 days');

-- Continuous aggregates (automatic downsampling)

CREATE MATERIALIZED VIEW metrics_hourly

WITH (timescaledb.continuous) AS

SELECT time_bucket('1 hour', time) AS bucket,

device_id,

AVG(cpu_percent) AS avg_cpu,

MAX(memory_mb) AS max_memory,

SUM(disk_io_ops) AS total_io

FROM metrics

GROUP BY bucket, device_id;

SELECT add_continuous_aggregate_policy('metrics_hourly',

start_offset => INTERVAL '3 hours',

end_offset => INTERVAL '1 hour',

schedule_interval => INTERVAL '1 hour');

-- Retention policy: drop raw data older than 30 days

SELECT add_retention_policy('metrics', INTERVAL '30 days');Pro tip: TimescaleDB's compression typically achieves 90-95% reduction. A table consuming 100 GB uncompressed drops to 5-10 GB after compression. Combined with continuous aggregates for older data, you can store years of metrics without massive storage costs. The key trade-off is that compressed chunks are read-only -- you can't UPDATE compressed data without decompressing first.

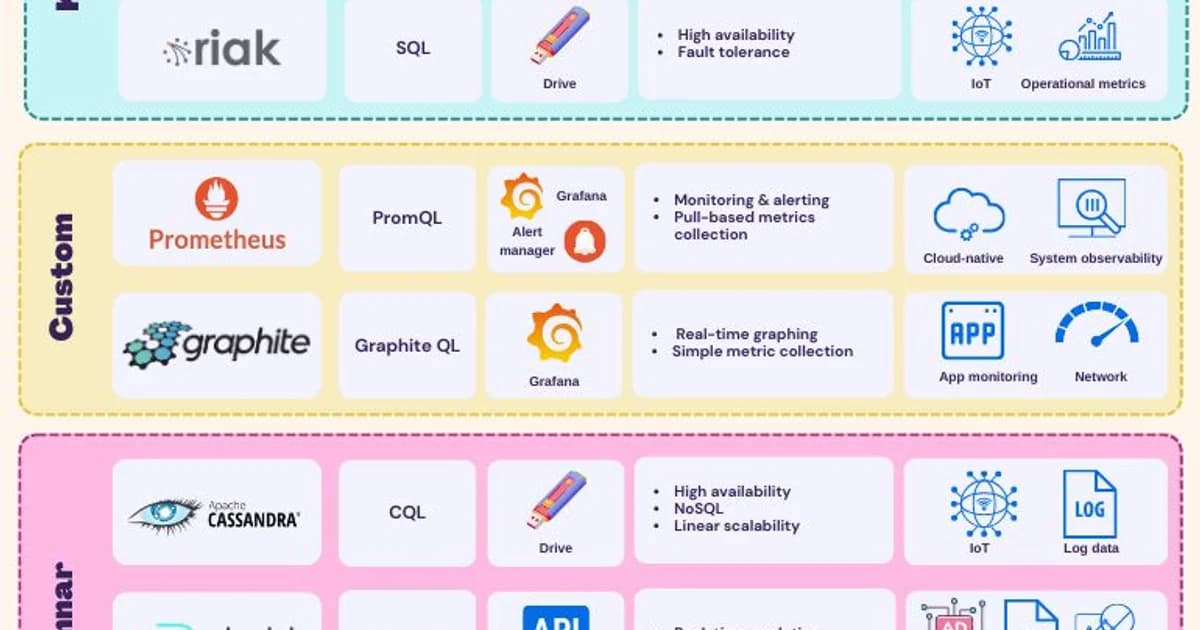

Prometheus: Built for Infrastructure Monitoring

Prometheus uses a pull model -- it scrapes metric endpoints at regular intervals. It's the de facto standard for Kubernetes monitoring and works with Grafana. But it's not a general-purpose TSDB: no SQL, no JOINs, limited retention, and it doesn't handle high-cardinality data well.

# prometheus.yml - basic configuration

global:

scrape_interval: 15s

evaluation_interval: 15s

scrape_configs:

- job_name: 'node'

static_configs:

- targets: ['localhost:9100']

- job_name: 'app'

metrics_path: '/metrics'

static_configs:

- targets: ['app:8080']# PromQL: Average CPU over the last 5 minutes

avg(rate(node_cpu_seconds_total{mode!="idle"}[5m])) by (instance)

# 99th percentile request latency

histogram_quantile(0.99, rate(http_request_duration_seconds_bucket[5m]))

# Alert: disk usage over 90%

node_filesystem_avail_bytes / node_filesystem_size_bytes < 0.1Use Prometheus for infrastructure and application metrics with Grafana dashboards. Don't use it for business analytics, IoT data, or anything that needs SQL queries or long-term storage beyond a few weeks. For long-term Prometheus storage, pair it with Thanos or Cortex.

ClickHouse: When You Need Serious Scale

ClickHouse is a columnar database built for analytical queries over massive datasets. It's not strictly a TSDB, but it handles time-series workloads exceptionally well at scale -- billions of rows, sub-second queries, and write throughput that exceeds dedicated TSDBs.

-- ClickHouse: Create a time-series table with MergeTree engine

CREATE TABLE events (

timestamp DateTime64(3),

event_type String,

user_id UInt64,

properties Map(String, String),

value Float64

)

ENGINE = MergeTree()

PARTITION BY toYYYYMM(timestamp)

ORDER BY (event_type, user_id, timestamp)

TTL timestamp + INTERVAL 90 DAY;

-- Query: events per minute by type, last 24 hours

SELECT

toStartOfMinute(timestamp) AS minute,

event_type,

count() AS event_count,

avg(value) AS avg_value

FROM events

WHERE timestamp > now() - INTERVAL 24 HOUR

GROUP BY minute, event_type

ORDER BY minute DESC;ClickHouse excels when you need to query billions of rows with SQL and low latency. It's overkill for infrastructure metrics (use Prometheus) and unnecessary if your dataset fits comfortably in TimescaleDB. But if you're processing terabytes of event data, log analytics, or real-time dashboards at scale, ClickHouse is hard to beat.

TSDB Hosting and Pricing Comparison

| Service | Based On | Starting Price/mo | Free Tier | Managed |

|---|---|---|---|---|

| Timescale Cloud | TimescaleDB | $29 (Starter) | 30-day trial | Yes |

| InfluxDB Cloud | InfluxDB | Free / $0.002 per MB | Yes | Yes |

| Grafana Cloud | Prometheus/Mimir | Free / $8 per 1K series | Yes (10K series) | Yes |

| ClickHouse Cloud | ClickHouse | Pay-per-use | Yes (limited) | Yes |

| Amazon Timestream | AWS proprietary | Pay-per-use | Free tier | Yes |

For most teams starting out, Timescale Cloud is the safest choice -- you get PostgreSQL compatibility and can migrate easily if needed. InfluxDB Cloud works well for IoT workloads with predictable schemas. Grafana Cloud is excellent if your primary use case is monitoring with Prometheus-style metrics.

Failure Modes: Where Each TSDB Breaks

PostgreSQL: Autovacuum Can't Keep Up

At around 50k writes/sec on a single PostgreSQL node, autovacuum starts falling behind. Dead tuples accumulate, bloat builds, and once the table is too bloated to vacuum in the maintenance window, query plans flip from index scans to sequential scans. Symptom: p99 latency climbs from 5ms to 2000ms overnight. Mitigation is aggressive per-table autovacuum tuning (autovacuum_vacuum_scale_factor = 0.01) and range-partitioning to keep individual partitions small, but the ceiling is real.

TimescaleDB: Compressed Chunk Updates

Once a chunk is compressed, it is effectively read-only. Late-arriving data (a sensor reports 3 days late) fails to insert into a compressed chunk with a cryptic error. Workaround: decompress the chunk, insert, recompress -- which is IO-heavy. Better fix: configure your compression policy to run against data older than your worst-case arrival skew (e.g., 14 days instead of 7).

InfluxDB: High-Cardinality Series Explosion

InfluxDB builds an inverted index of every unique series (tag set). Add a tag with high cardinality -- user_id, request_id, trace_id -- and the index explodes. A cluster with 10M series runs fine; at 100M it OOMs. The fix is to never put high-cardinality identifiers in tags; put them in fields instead, or switch to a TSDB that does not build per-series indexes.

Prometheus: Retention and Cardinality Hit the Same Wall

Prometheus stores all data in memory-mapped files. Default retention is 15 days because longer retention means a larger working set than most servers can hold. Add Thanos or Cortex for long-term storage. Also watch scrape_samples_post_metric_relabeling -- a single misconfigured exporter can mint millions of label combinations in an hour.

ClickHouse: ORDER BY Is a Commitment

The ORDER BY clause in a MergeTree table determines physical layout. Pick it wrong and queries that do not match the order scan the whole table. Changing ORDER BY requires rebuilding the table. Design for your dominant query pattern, not the most flexible one -- or use projections (v22.3+) for alternate orderings.

Decision Framework: Choosing the Right TSDB

- Fewer than 50,000 writes/second and need SQL JOINs? Use PostgreSQL with TimescaleDB.

- Infrastructure monitoring with Kubernetes? Use Prometheus with Grafana.

- IoT or dedicated metric storage with simple queries? Use InfluxDB.

- Billions of rows, analytical queries, real-time dashboards? Use ClickHouse.

- Fewer than 10 million rows total? Plain PostgreSQL with range partitioning is fine. Don't add complexity you don't need.

- Need long-term Prometheus storage? Add Thanos or Cortex, or switch to Grafana Mimir.

Frequently Asked Questions

Can PostgreSQL handle time-series data?

Yes, for moderate workloads. Range partitioning by time, BRIN indexes, and careful vacuuming support up to tens of thousands of writes per second and tables with hundreds of millions of rows. Add TimescaleDB for automatic partitioning, compression, and continuous aggregates. Only move to a dedicated TSDB when you outgrow this setup.

What is the difference between TimescaleDB and InfluxDB?

TimescaleDB is a PostgreSQL extension -- you get full SQL, JOINs, and PostgreSQL ecosystem compatibility. InfluxDB is a purpose-built TSDB with its own query languages and storage engine. TimescaleDB is better when you need to combine time-series and relational data. InfluxDB excels at pure metric storage with extremely high write throughput and built-in downsampling.

Is ClickHouse a time-series database?

ClickHouse is a columnar OLAP database, not a dedicated TSDB. However, its columnar storage, partitioning, and compression make it excellent for time-series workloads, especially at massive scale. It lacks some TSDB-specific features like built-in downsampling policies, but its SQL support and query performance on billions of rows are unmatched.

When should I use Prometheus vs a TSDB like InfluxDB?

Use Prometheus for infrastructure and application monitoring -- it's pull-based, integrates natively with Kubernetes, and PromQL is powerful for operational queries. Use InfluxDB when you need push-based ingestion (IoT, external data sources), longer retention, or SQL-like queries. They serve overlapping but different use cases.

What is downsampling in time-series databases?

Downsampling reduces the resolution of older data to save storage. Instead of keeping every 1-second reading for a year, you aggregate to 1-minute averages after 7 days and 1-hour averages after 30 days. TimescaleDB calls these "continuous aggregates," InfluxDB uses "tasks," and Prometheus uses "recording rules." All achieve the same goal.

How much storage does a time-series database save with compression?

Dedicated TSDBs typically achieve 10-20x compression on time-series data. TimescaleDB reports 90-95% compression ratios. InfluxDB achieves similar results. The compression works well because time-series data has predictable patterns: timestamps increment sequentially (delta encoding) and metric values change slowly between readings (XOR compression).

What is a hypertable in TimescaleDB?

A hypertable is TimescaleDB's abstraction over PostgreSQL's native partitioning. It automatically creates time-based chunks (partitions) as data arrives, manages compression policies, and enables continuous aggregates. You interact with it as a single table using standard SQL, but internally it's a collection of time-partitioned chunks optimized for time-series queries.

Written by

Abhishek Patel

Infrastructure engineer with 10+ years building production systems on AWS, GCP, and bare metal. Writes practical guides on cloud architecture, containers, networking, and Linux for developers who want to understand how things actually work under the hood.

Related Articles

SQLite at the Edge: When libSQL Beats Postgres

SQLite at the edge via libSQL embedded replicas and Cloudflare D1 delivers 2-5ms reads worldwide versus 20-100ms for Postgres read replicas. Real benchmarks, pricing comparisons, production failure modes, and a decision framework for when edge SQLite wins and when Postgres-with-replicas is still the right call.

15 min read

DatabasesSnowflake vs BigQuery vs Databricks vs Redshift (2026): Which Data Warehouse?

Snowflake wins on concurrency, BigQuery on serverless simplicity, Databricks on ML, Redshift on AWS depth. Real 2026 pricing, TPC-DS benchmarks, and a clear decision matrix.

16 min read

DatabasesPostgres as a Queue: When You Don't Need Kafka or RabbitMQ

Build a production-grade task queue entirely in PostgreSQL using SELECT FOR UPDATE SKIP LOCKED, LISTEN/NOTIFY, exponential backoff retries, and dead-letter handling. Covers PGMQ, Graphile Worker, River, and when a dedicated broker actually earns its keep.

14 min read

Enjoyed this article?

Get more like this in your inbox. No spam, unsubscribe anytime.