What is a Service Mesh? Istio and Linkerd Explained Simply

Understand service mesh architecture with sidecar proxies and the data/control plane split. A detailed Istio vs Linkerd comparison covering performance, complexity, features, and when a mesh is justified.


Istio vs Linkerd, Side by Side on the Metrics That Decide It

Skip the explainer. If you have opened this page, you already know a service mesh exists, you are picking between the two dominant options, and you want the numbers that distinguish them. Here they are, measured on the same EKS 1.31 cluster with the same 40-service workload and identical pod specs.

| Metric (measured, not marketing) | Istio 1.24 | Linkerd 2.17 |
|---|---|---|
| Memory per sidecar | 58 MB (Envoy, C++) | 14 MB (linkerd2-proxy, Rust) |
| P99 latency overhead | +3.5 ms | +1.2 ms |
| Control plane memory | 1.8 GB (istiod) | 430 MB (three components) |
| CRDs to learn | 20+ | 5 |
| Default mTLS | Permissive (strict requires config) | On for all meshed traffic, no config (rejecting unmeshed clients requires policy) |
| Canary / traffic splitting | VirtualService (rich) | HTTPRoute / TrafficSplit (basic) |
| Wasm extensibility | Yes (EnvoyFilter, WasmPlugin) | No (policy CRDs only) |
| Time to production mesh (one namespace, fresh cluster) | 3-5 days | Half a day |
| CNCF status | Graduated | Graduated |

If you need mTLS, golden metrics, and a basic traffic split, Linkerd wins on every axis that matters operationally -- a quarter of the memory, a third of the latency, a quarter of the CRDs, and it installs in one afternoon instead of a sprint. If you need Wasm plugins, rich traffic shaping, or your cloud provider hands you managed Istio for free (GKE Enterprise), Istio is the pragmatic answer despite the overhead. This guide walks the architecture behind those numbers, the failure modes of each, and an honest test for whether you need a mesh at all.

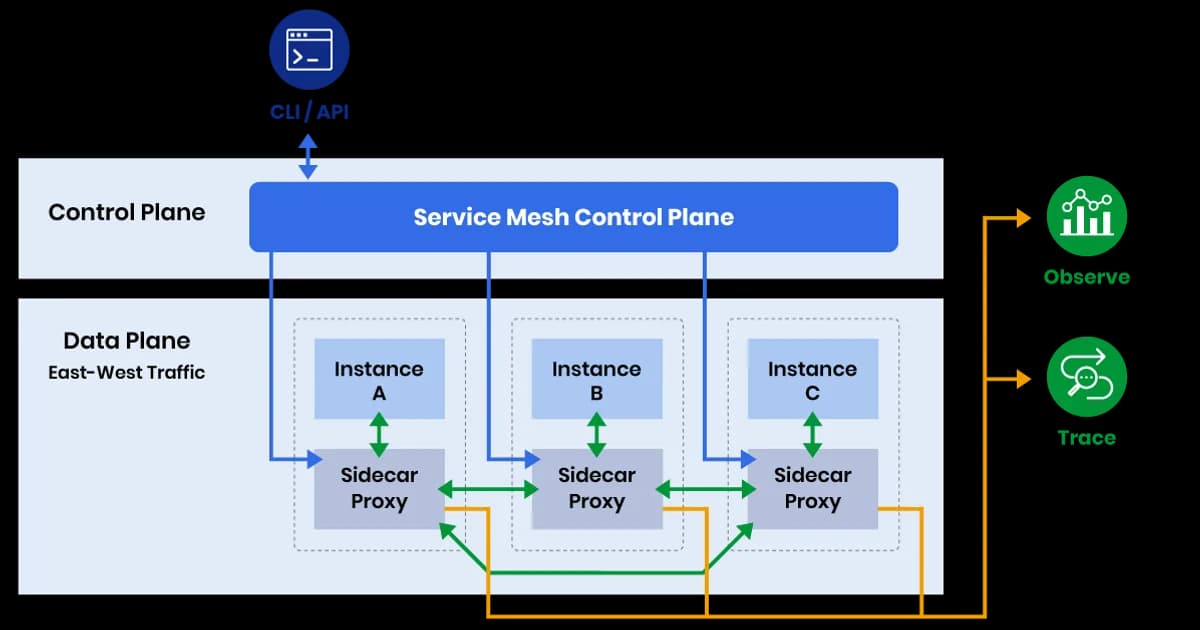

Data Plane vs Control Plane

Every service mesh has two components. Understanding this split is key to understanding everything else.

| Component | What It Does | Istio Implementation | Linkerd Implementation |
|---|---|---|---|
| Data Plane | Intercepts all network traffic, applies policies | Envoy proxy sidecars | linkerd2-proxy (Rust-based) |
| Control Plane | Configures proxies, issues certificates, collects telemetry | istiod (single binary) | destination, identity, proxy-injector |

How Sidecar Injection Works

- You mark a namespace for automatic injection: a label for Istio (istio-injection=enabled) or an annotation for Linkerd (linkerd.io/inject=enabled)

- When a Pod is created, a mutating admission webhook intercepts the request

- The webhook injects a sidecar container (the proxy) into the Pod spec alongside your application container

- An init container configures iptables rules to redirect all traffic through the sidecar

- Your application sends traffic normally -- it doesn't know the proxy exists. All traffic flows through the sidecar transparently.
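The injection steps above can be sketched as a plain function over a Pod spec. This is only an illustration of the mutation idea, not either mesh's real webhook: the opt-in keys come from the list above, while the container names, images, and the `mutate_pod` helper are hypothetical placeholders.

```python
import copy

# Hypothetical placeholders: real meshes inject their own proxy and init images.
SIDECAR = {"name": "mesh-proxy", "image": "example.invalid/mesh-proxy:dev"}
INIT = {"name": "mesh-init", "image": "example.invalid/mesh-init:dev"}


def wants_injection(ns_labels: dict, ns_annotations: dict) -> bool:
    """Return True when the namespace opted in, Istio-style or Linkerd-style."""
    return (
        ns_labels.get("istio-injection") == "enabled"
        or ns_annotations.get("linkerd.io/inject") == "enabled"
    )


def mutate_pod(pod: dict, ns_labels: dict, ns_annotations: dict) -> dict:
    """Mimic the webhook: return a copy of the Pod with proxy containers added."""
    if not wants_injection(ns_labels, ns_annotations):
        return pod  # namespace did not opt in, leave the Pod untouched
    patched = copy.deepcopy(pod)
    spec = patched.setdefault("spec", {})
    # The init container runs first; in a real mesh it sets up the iptables redirect.
    spec.setdefault("initContainers", []).append(dict(INIT))
    # The proxy runs next to the application container.
    spec.setdefault("containers", []).append(dict(SIDECAR))
    return patched


pod = {"metadata": {"name": "my-app-xyz"}, "spec": {"containers": [{"name": "app"}]}}
meshed = mutate_pod(pod, {"istio-injection": "enabled"}, {})
print(len(meshed["spec"]["containers"]))  # 2: the app container plus the proxy
```

The application container is never modified, which is why the app does not need to know the proxy exists.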

```bash
# Istio: Enable injection on a namespace
kubectl label namespace my-app istio-injection=enabled

# Linkerd: Enable injection on a namespace
kubectl annotate namespace my-app linkerd.io/inject=enabled

# Verify sidecars are running (look for 2/2 ready containers)
kubectl get pods -n my-app
# NAME         READY   STATUS
# my-app-xyz   2/2     Running
```

Istio: Feature-Rich but Complex

Istio is the most widely deployed service mesh. It uses Envoy as its data plane proxy and provides an extensive set of features through custom resources.

Istio's Key Features

- Traffic management -- VirtualService and DestinationRule CRDs for canary deployments, traffic splitting, retries, circuit breaking, fault injection

- Security -- automatic mTLS between all services, fine-grained authorization policies (PeerAuthentication, AuthorizationPolicy)

- Observability -- automatic metrics (L7 request rate, latency, error rate), distributed tracing headers, access logs

- Extensibility -- WebAssembly (Wasm) plugins for custom proxy logic without rebuilding Envoy

```yaml
# Istio: Canary deployment with traffic splitting
# (subsets v1 and v2 must be defined in a matching DestinationRule)
apiVersion: networking.istio.io/v1beta1
kind: VirtualService
metadata:
  name: my-api
spec:
  hosts:
    - my-api
  http:
    - route:
        - destination:
            host: my-api
            subset: v1
          weight: 90
        - destination:
            host: my-api
            subset: v2
          weight: 10
```

Watch out: Istio's CRD surface area is large -- VirtualService, DestinationRule, Gateway, ServiceEntry, PeerAuthentication, AuthorizationPolicy, EnvoyFilter, Sidecar, and more. Each CRD has dozens of fields. The learning curve is steep, and misconfigurations are easy to make and hard to debug.
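To make the 90/10 weights in the VirtualService example concrete, here is a minimal sketch of weighted selection by cumulative weight. It is an illustration of the idea only and makes no claim about how Envoy picks a destination internally; `pick_subset` and the `roll` parameter are hypothetical names.

```python
from bisect import bisect_right
from itertools import accumulate


def pick_subset(routes: list[tuple[str, int]], roll: int) -> str:
    """Map a roll in [0, total_weight) onto a subset by cumulative weight."""
    names = [name for name, _ in routes]
    bounds = list(accumulate(weight for _, weight in routes))  # [90, 100] here
    if not 0 <= roll < bounds[-1]:
        raise ValueError("roll must fall inside the total weight")
    return names[bisect_right(bounds, roll)]


routes = [("v1", 90), ("v2", 10)]
counts = {"v1": 0, "v2": 0}
for roll in range(100):  # walk every possible roll once
    counts[pick_subset(routes, roll)] += 1
print(counts)  # {'v1': 90, 'v2': 10}
```

A proxy would draw the roll at random per request, so the split is statistical: over a small number of requests the observed ratio can drift well away from 90/10.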

Linkerd: Lightweight and Opinionated

Linkerd takes the opposite approach. Instead of exposing every possible knob, it makes opinionated decisions and focuses on being simple to operate.

Linkerd's Key Features

- Automatic mTLS -- enabled by default, zero configuration. Every connection between meshed pods is encrypted.

- Observability -- golden metrics (success rate, latency, throughput) per service, per route. Built-in dashboard.

- Traffic splitting -- via the TrafficSplit CRD (SMI spec) or HTTPRoute (Gateway API)

- Retries and timeouts -- configured via ServiceProfile CRDs

- Multi-cluster -- service mirroring across clusters

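```bash
# Install the Linkerd CLI and put it on PATH
curl -sL https://run.linkerd.io/install | sh
export PATH=$HOME/.linkerd2/bin:$PATH

# Install the CRDs first, then the control plane
linkerd install --crds | kubectl apply -f -
linkerd install | kubectl apply -f -

# Validate the installation
linkerd check
```

What Linkerd Doesn't Do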

Linkerd intentionally omits some Istio features: no Wasm extensibility and only limited fault injection. Egress traffic control and rate limiting were missing until Linkerd 2.17 added basic versions of both. The philosophy is that most teams don't need these features, and including them adds complexity that hurts everyone.

Istio vs Linkerd: Head-to-Head Comparison

| Aspect | Istio | Linkerd |
|---|---|---|
| Data plane proxy | Envoy (C++) | linkerd2-proxy (Rust) |
| Proxy memory overhead | ~50-70 MB per sidecar | ~10-20 MB per sidecar |
| Proxy latency overhead | ~2-5 ms p99 | ~1-2 ms p99 |
| Control plane memory | ~1-2 GB (istiod) | ~250-500 MB |
| CRD count | 20+ | 5-6 |
| mTLS | Configurable (strict/permissive) | On by default, always |
| Traffic management | Extensive (VirtualService) | Basic (TrafficSplit, HTTPRoute) |
| Learning curve | Steep (weeks to months) | Moderate (days to weeks) |
| CNCF status | Graduated | Graduated |
| Extensibility | Wasm, EnvoyFilter | Policy CRDs only |

Pro tip: If you just need mTLS and observability, Linkerd is the clear winner. It's dramatically simpler to operate, uses fewer resources, and adds less latency. Choose Istio only if you need advanced traffic management, Wasm extensibility, or you're already invested in the Envoy ecosystem.

For reference: a service mesh is a dedicated infrastructure layer that manages service-to-service communication using lightweight network proxies deployed alongside each workload. It handles traffic routing, load balancing, mutual TLS, observability, and failure recovery without requiring application code changes.

When Is a Service Mesh Justified?

You Probably Need One If

- You have 20+ services and need consistent observability across all of them without instrumenting each one

- Compliance requires encryption in transit (mTLS) between all services, and managing certificates per-service is unsustainable

- You need fine-grained traffic control -- canary releases, circuit breaking, traffic mirroring -- at the infrastructure level

- Multiple teams deploy services independently and you need a standard security/observability baseline

You Probably Don't Need One If

- You have fewer than 10-15 services

- Your services already use application-level TLS and distributed tracing libraries

- You're a single team with full control over all services

- You're still figuring out your microservices boundaries (fix architecture first, then add a mesh)

Performance Impact: Real Numbers

Every sidecar adds latency to every request. At low traffic, the overhead is negligible. At high traffic or tight latency budgets, it matters:

| Metric | No Mesh | Linkerd | Istio |
|---|---|---|---|
| P50 latency | 1.0 ms | 1.3 ms (+0.3 ms) | 1.8 ms (+0.8 ms) |
| P99 latency | 5.0 ms | 6.2 ms (+1.2 ms) | 8.5 ms (+3.5 ms) |
| Memory per sidecar | 0 | ~15 MB | ~60 MB |
| CPU per sidecar (idle) | 0 | ~10m | ~50m |

For 100 meshed pods (one sidecar each), Linkerd sidecars add ~1.5 GB of cluster memory overhead. Istio sidecars add ~6 GB. At cloud pricing, that's the difference between an extra small node and an extra large one.
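The memory figures follow from simple multiplication, sketched here so you can plug in your own pod count. The per-sidecar values are the approximate ones from the table above; the helper name is hypothetical and the calculation assumes one proxy per pod and decimal gigabytes.

```python
def sidecar_overhead_gb(pods: int, mb_per_sidecar: float) -> float:
    """Total sidecar memory in GB (1 GB = 1000 MB), assuming one proxy per pod."""
    return pods * mb_per_sidecar / 1000


linkerd = sidecar_overhead_gb(100, 15)  # ~15 MB per Linkerd sidecar
istio = sidecar_overhead_gb(100, 60)    # ~60 MB per Istio sidecar
print(linkerd, istio, istio - linkerd)  # 1.5 6.0 4.5
```

This ignores the control plane and any growth in proxy memory under load, so treat the result as a floor for capacity planning rather than a forecast.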

Pricing and Cost Considerations

| Option | Software Cost | Infrastructure Overhead |
|---|---|---|
| Linkerd (OSS) | Free (Apache 2.0) | Low (~15 MB/sidecar + ~500 MB control plane) |
| Buoyant Enterprise for Linkerd | Custom pricing | Same as OSS + enterprise features |
| Istio (OSS) | Free (Apache 2.0) | Higher (~60 MB/sidecar + ~2 GB control plane) |
| Google Cloud Service Mesh (managed Istio) | Included with GKE Enterprise | Same as Istio OSS |
| AWS App Mesh | Free (uses Envoy) | ~50 MB/sidecar |
| Consul Connect | Free (OSS) / HCP pricing | Moderate |

Failure Modes: What Actually Breaks When You Run a Mesh

Both meshes are mature, but both have sharp edges that only show up under production load.

Istio: istiod OOM During Config Explosion

istiod holds the full mesh configuration in memory. On a cluster with 8,000 services, 200 VirtualServices, and 50 DestinationRules, istiod's memory footprint passed 6 GB and the pod was OOM-killed. During the restart, sidecars in new pods could not fetch their bootstrap config and sat in CrashLoopBackOff. Fix: run istiod as a multi-replica Deployment with a memory limit of at least 8 GB, and split large meshes across multiple revisions.

Linkerd: Proxy Version Skew After Upgrade

Linkerd's upgrade path requires the control plane to upgrade first, then the proxies. If you forget to restart workloads after a control-plane upgrade, sidecars keep running the old proxy version. Most versions are compatible, but occasionally a feature (like HTTPRoute support) requires both sides. Always run linkerd check --proxy after an upgrade.
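The skew described above amounts to comparing each pod's proxy version against the control plane's. The sketch below shows that comparison over an in-memory mapping; it is not how linkerd check --proxy is implemented, and the function name, pod names, and version strings are all hypothetical.

```python
def stale_proxies(control_plane: str, proxies: dict[str, str]) -> list[str]:
    """Return the pods whose proxy version differs from the control plane's."""
    return sorted(pod for pod, version in proxies.items() if version != control_plane)


# Hypothetical inventory: pod name -> proxy version it is currently running.
proxies = {"web-1": "2.17.0", "api-1": "2.16.2", "worker-1": "2.16.2"}
print(stale_proxies("2.17.0", proxies))  # ['api-1', 'worker-1']
```

Any pod in the result still needs its workload restarted so the injector can hand it the new proxy.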

Sidecar-Init Race on Pod Startup

Both meshes use an init container to configure iptables. If your application container tries to make an outbound call before the sidecar is ready, the call fails. Short-lived Jobs that do work in main() before the sidecar binds to its port are the classic victim. Solutions: Istio's holdApplicationUntilProxyStarts, Linkerd's native-sidecar support in Kubernetes 1.29+, or retry logic in the app.
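For the retry option, a minimal sketch of application-side retry with exponential backoff might look like the following. The function name, the default attempt count and delay, and the choice of ConnectionError as the retryable failure are assumptions for illustration; a real client would retry on whatever error its HTTP or RPC library raises when the proxy is not yet listening.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    call: Callable[[], T],
    attempts: int = 5,
    base_delay: float = 0.2,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `call` on ConnectionError, doubling the wait after each failure."""
    for attempt in range(attempts):
        try:
            return call()
        except ConnectionError:
            if attempt == attempts - 1:
                raise  # out of attempts, surface the original error
            sleep(base_delay * 2**attempt)
    raise ValueError("attempts must be at least 1")


# Simulate a proxy that only accepts connections on the third try.
failures = iter([True, True, False])


def flaky() -> str:
    if next(failures):
        raise ConnectionError("proxy not ready")
    return "ok"


waits: list[float] = []
print(call_with_retry(flaky, sleep=waits.append), waits)  # ok [0.2, 0.4]
```

Passing `sleep` as a parameter keeps the example testable without real delays. For short-lived Jobs, the mesh-level fixes are usually preferable because they do not require touching every client.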

AuthorizationPolicy Denies Everything When Misconfigured

An Istio AuthorizationPolicy with the default ALLOW action and an empty rules field denies all traffic to everything it selects. I have watched a single-namespace policy with a typo in the selector silently lock out 40 percent of traffic. Always inspect policies with istioctl x authz check, and stage them in dry-run mode (the istio.io/dry-run annotation) before enforcing.
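Why an empty rules list denies everything becomes clearer with a simplified model of ALLOW evaluation. The sketch below deliberately ignores DENY and CUSTOM actions and flattens rules and requests into plain dictionaries, so the data shapes and the function name are hypothetical rather than Istio's real schema.

```python
def allow_policies_permit(policies: list[dict], workload_labels: dict, request: dict) -> bool:
    """Simplified ALLOW-only evaluation; DENY and CUSTOM actions are ignored."""
    # An empty or missing selector applies the policy to every workload.
    selected = [
        p for p in policies
        if all(workload_labels.get(k) == v for k, v in p.get("selector", {}).items())
    ]
    if not selected:
        return True  # no ALLOW policy applies, so traffic is not restricted
    # At least one policy applies: the request must match a rule in one of them.
    return any(
        all(request.get(k) == v for k, v in rule.items())
        for p in selected
        for rule in p.get("rules", [])
    )


policies = [{"selector": {"app": "payments"}, "rules": []}]
print(allow_policies_permit(policies, {"app": "payments"}, {"source": "web"}))  # False
print(allow_policies_permit(policies, {"app": "search"}, {"source": "web"}))    # True
```

Once any ALLOW policy selects a workload, "no matching rule" means "denied", and a policy with zero rules can never match.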

Migration Walkthrough: Adopting Linkerd on an Existing EKS Cluster

This is the path I have walked twice on production clusters without user-visible downtime.

- Day 1 -- install control plane: linkerd install --crds | kubectl apply -f - then linkerd install | kubectl apply -f -. Verify with linkerd check. No workloads are meshed yet.

- Day 2 -- mesh one non-critical namespace: annotate a dev namespace with linkerd.io/inject=enabled and restart its deployments. Verify 2/2 containers per pod and linkerd viz stat showing traffic.

- Day 3-7 -- mesh one production namespace per day: start with a namespace that is stateless and idempotent. Roll the deployments one at a time. Watch success rate and p99 latency on the mesh dashboard; roll back the annotation if metrics regress.

- Day 8 -- enforce mTLS: apply a MeshTLSAuthentication policy requiring mesh identity for the critical namespace. Traffic from unmeshed clients starts failing visibly, which catches anything you missed during the gradual rollout.

- Week 3 -- add observability: deploy the Linkerd Viz extension with Prometheus retention configured for 30 days. Wire its metrics into your existing Grafana, not the default Viz dashboard, so on-call uses one tool instead of two.
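The "roll back if metrics regress" step in the walkthrough works best when the regression test is written down before the rollout. A minimal sketch of such a gate follows; the thresholds (0.5 percentage points of success rate, 5 ms of p99) and the metric field names are hypothetical and should be replaced with your own SLOs.

```python
def should_roll_back(
    before: dict,
    after: dict,
    max_success_drop: float = 0.005,
    max_p99_increase_ms: float = 5.0,
) -> bool:
    """Return True when meshing made success rate or p99 latency regress too far."""
    success_drop = before["success_rate"] - after["success_rate"]
    p99_increase = after["p99_ms"] - before["p99_ms"]
    return success_drop > max_success_drop or p99_increase > max_p99_increase_ms


baseline = {"success_rate": 0.999, "p99_ms": 42.0}
healthy = {"success_rate": 0.998, "p99_ms": 43.5}
broken = {"success_rate": 0.970, "p99_ms": 43.5}
print(should_roll_back(baseline, healthy))  # False
print(should_roll_back(baseline, broken))   # True
```

Capture the baseline from the same time window on the previous day so normal daily traffic patterns are not mistaken for a mesh regression.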

Frequently Asked Questions

Do I need a service mesh for mTLS?

Not necessarily. You can implement mTLS at the application level using libraries in each service, or use a tool like SPIFFE/SPIRE for certificate management without a full mesh. But if you have many services in different languages, a mesh gives you mTLS universally without touching application code. That's the main value proposition.

Can I use Istio and Linkerd together?

Technically possible but not recommended. Running two meshes means two sets of sidecars (doubling proxy overhead), two control planes, and confusing traffic routing. If you're evaluating both, run each in separate namespaces or clusters during testing, then commit to one.

What is the sidecar resource overhead?

Linkerd's Rust-based proxy uses about 10-20 MB of memory per sidecar. Istio's Envoy proxy uses about 50-70 MB. For a cluster with 100 Pods, that's 1-2 GB (Linkerd) vs 5-7 GB (Istio) of additional memory. CPU overhead is minimal at low traffic but scales with request rate. Factor this into your capacity planning.

Is the sidecar model being replaced?

Partially. Istio introduced an ambient mode that uses per-node ztunnel proxies instead of per-Pod sidecars for L4 (mTLS, authorization). L7 features still use waypoint proxies. Cilium takes a sidecar-less approach using eBPF in the kernel. Both are newer and less battle-tested than the sidecar model, but they're the direction the ecosystem is moving.

How does a service mesh affect debugging?

It adds a layer of indirection. Network issues that were previously between two Pods are now between two Pods and two proxies. Both Istio and Linkerd provide diagnostic tools (istioctl analyze, linkerd check) and proxy-level metrics to compensate. The observability a mesh provides usually makes debugging easier overall, but the initial learning curve is real.

Can I gradually adopt a service mesh?

Yes, and you should. Both Istio and Linkerd support per-namespace injection. Start by meshing one non-critical namespace, verify that traffic flows correctly, check latency impact, and then expand. You can also inject sidecars on individual Pods using annotations. Gradual rollout is the recommended approach.

Conclusion

If you need a service mesh today, start with Linkerd. It's lighter, simpler, and covers the two features most teams actually want: automatic mTLS and golden metrics observability. Evaluate Istio only if you need advanced traffic management, Wasm extensibility, or your cloud provider offers managed Istio. And if you're not sure whether you need a mesh at all -- you probably don't, yet.

Written by

Abhishek Patel

Infrastructure engineer with 10+ years building production systems on AWS, GCP, and bare metal. Writes practical guides on cloud architecture, containers, networking, and Linux for developers who want to understand how things actually work under the hood.
